Startup Customer Validation: Interview Questions That Actually Work

Most founders approach customer interviews as a sales pitch in disguise. They walk into the room with a vision, seek validation, and, unsurprisingly to anyone familiar with cognitive psychology, find it.

They leave the conversation feeling "ready," only to discover months later that their "validated" customers won't actually open their wallets.

The problem isn't the customer. The problem is the methodology.

In the Startup Readiness Framework, the customer interview is a diagnostic tool, not a promotional one. If you aren't hearing things that challenge your core assumptions, you aren't interviewing; you are evangelizing.

To move from a "good idea" to a "validated startup," you must master the art of extracting truth from people who are socially programmed to lie to you.

Stop asking hypotheticals. Start asking these.

TL;DR: Customer Interviews Are Not for Validation. They’re for Evidence.

Most founders don’t fail at customer interviews because they lack effort. They fail because they use the wrong method.

Customers will tell you what you want to hear. Not because they’re dishonest, but because of social pressure, cognitive bias, and their own inability to predict future behavior.

If you rely on opinions, hypotheticals, or compliments, you will walk away with false validation.

Real validation comes from evidence, not intent.

To extract that evidence:

Ask about past behavior, not future intent: What people have done is far more reliable than what they say they’ll do.

Focus on the problem, not your solution: If you’re talking more than 20% of the time, you’re pitching, not learning.

Look for existing workarounds: If customers are already hacking together solutions, the problem is real. If they’re doing nothing, it’s not a priority.

Separate signal from noise: Compliments, vague interest, and “send me a deck” are noise. Specific problems, measurable consequences, and current spend are signal.

Run enough interviews to see patterns: Fewer than 10 conversations is anecdotal. Patterns (not opinions) create validation.

If your interviews don’t challenge your assumptions, you’re not discovering the truth. You’re reinforcing your bias.

The goal is not to prove your idea is right. The goal is to uncover whether a real, costly, and urgent problem actually exists.

The Cognitive Bias Trap: Why Your Customers Lie

In my research on entrepreneurial learning and decision making, I've found that founders are particularly susceptible to Confirmation Bias: the tendency to search for, interpret, and recall information in a way that confirms one's prior beliefs.

When you ask "Would you use an app that does X?", you are triggering several social and cognitive responses simultaneously:

The Social Desirability Bias: Humans are wired to be helpful and avoid conflict. It is cognitively easier for a potential customer to say "Yes, that sounds interesting" than to tell a passionate founder their idea doesn't solve a real problem.

The Projection Bias: Customers are unreliable predictors of their own future behavior. They genuinely believe they will be more organized, more productive, or more disciplined next month, which is why stated intent and actual behavior so often diverge.

The Compliment Trap: Enthusiasm is not evidence. When a customer says "I love this idea," they are telling you how the conversation made them feel, not whether they would pay to solve the problem. Compliments are the most expensive form of false validation because they feel like progress while producing none.

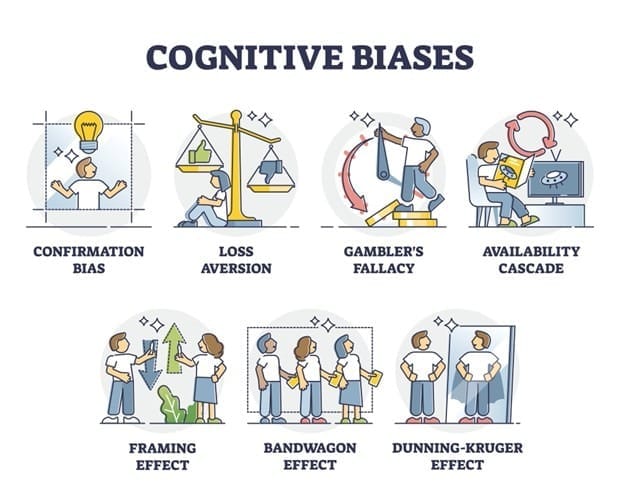

The Cognitive Biases Sabotaging Your Validation

The human brain is a meaning-making machine that prioritizes internal consistency over external reality. During a customer interview, six additional biases act as "noise" that drowns out the "signal" of true market demand.

1. Loss Aversion: The Fear of the Pivot

Loss aversion is the tendency to feel the pain of losing something approximately twice as strongly as the pleasure of gaining something equivalent. It was first documented by psychologists Daniel Kahneman and Amos Tversky and is one of the most consistently replicated findings in behavioral economics.

The Impact: You ignore negative feedback because accepting it means "losing" the progress you've made, even if that progress is heading in the wrong direction. For founders, this often looks like doubling down on a failing feature rather than pivoting toward what the customer actually needs.

2. The Gambler’s Fallacy: The "Any Day Now" Trap

The Gambler's Fallacy is the mistaken belief that past random events influence the probability of future ones (that a string of losses makes a win more likely). In reality, each new customer conversation is an independent data point, not part of a sequence moving toward an inevitable outcome.

The Impact: Founders often think, "I've had 20 'No's' in a row, so the 'Yes' must be coming soon." In reality, 20 "No's" is a data set telling you that your Problem Clarity is fundamentally broken. The market is not withholding a "Yes" — it is giving you a consistent answer you haven't yet accepted.
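One way to see why a losing streak is evidence rather than a promise is to treat each conversation as an independent trial with the same unknown "Yes" rate, start from a uniform prior, and update on what you hear. Both the independence assumption and the uniform prior are illustrative assumptions, not part of the Startup Readiness Framework, and the function name is hypothetical. A minimal sketch:

```python
from fractions import Fraction


def posterior_yes_rate(yes_count, no_count, prior_yes=1, prior_no=1):
    """Posterior mean of the "Yes" rate under a Beta(prior_yes, prior_no) prior.

    Assumes every interview is an independent trial with the same unknown
    "Yes" rate. The default Beta(1, 1) prior is uniform (an assumption).
    """
    return Fraction(
        prior_yes + yes_count,
        prior_yes + prior_no + yes_count + no_count,
    )


before = posterior_yes_rate(0, 0)   # belief before any interviews
after = posterior_yes_rate(0, 20)   # belief after twenty straight "No's"
print(before, after)
```

Under these assumptions, twenty straight "No's" pull the estimated "Yes" rate from 1 in 2 down to 1 in 22. The streak makes the next "Yes" less likely, not more.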

3. Availability Cascade: The Echo Chamber Effect

An availability cascade is a self-reinforcing cycle in which a belief becomes more credible the more it is repeated, regardless of whether the underlying evidence has changed. The more a narrative dominates a feed, a conference, or a peer group, the more it feels like market truth.

The Impact: Because everyone on LinkedIn is talking about "AI-driven productivity," you assume every customer actually wants it. You mistake hype for a validated customer need, and you build a solution to a problem your customer has never actually tried to solve.

4. The Framing Effect: Leading the Witness

The framing effect is the phenomenon where people respond differently to the same information depending on how it is presented. In a customer interview, the specific words in your question are not neutral — they are instructions telling the respondent which direction to lean.

The Impact: Asking "Would you use this to save time?" frames the answer toward "Yes." Asking "Walk me through your most time-consuming task" frames the conversation toward reality. The first question validates your assumption; the second one tests it.

5. The Bandwagon Effect: The Validation Illusion

The bandwagon effect is the tendency for individuals to adopt a belief or behavior because others around them appear to hold it. In group interview settings, this dynamic can collapse an entire room of independent opinions into a single manufactured consensus within minutes.

The Impact: If you interview a group or a committee, one dominant voice can sway the others. You leave thinking the whole room agreed with you, when in reality they simply succumbed to the group dynamic. This is why one-on-one interviews almost always produce more honest signal than panels or focus groups.

6. The Dunning-Kruger Effect: The "Expert" Blindspot

The Dunning-Kruger effect is a cognitive pattern in which people with limited knowledge in a domain overestimate their competence in that domain. The less someone knows, the less equipped they are to recognize the boundaries of what they don't know.

The Impact: Novice founders often think they "understand the market" after only three interviews. They lack the meta-cognition to realize how much they don't know, leading to premature scaling and avoidable failure. In the Startup Readiness Framework, this is one of the most common reasons a founder's self-assessed Problem Clarity score diverges sharply from their evidence-based score.

Why These Biases Impact Your Startup Readiness

These biases don't just affect your mood; they corrupt your Problem Clarity.

A customer interview is a structured conversation designed to understand a customer’s past behavior, problems, and workarounds, not to validate a solution idea.

However, when your interview data is filtered through these cognitive traps, you end up with a "false positive" for readiness.

You think you have validated a problem, but you’ve actually just validated your own internal narrative.

As a founder, your job is to be an intellectual detective, not a cheerleader. Moving from a biased conversation to an evidence-based diagnosis is what separates the founders who scale from the ones who merely "stay busy."

The Three Pillars of Truth-Extraction

To bypass these biases, your interview process must be rooted in three non-negotiable rules:

1. The "Past Behavior" Rule

The best predictor of future behavior is not a stated intention; it is past action.

Ineffective: "How much would you pay for a solution to this?" (Hypothetical)

Effective: "How much did you spend the last time you tried to fix this? How much time? How many person-hours? How much money?" (Evidence-based)

2. The "Problem-to-Solution" Ratio

A successful validation interview should be 90% about the problem and 10% (at most) about your solution.

If you find yourself talking more than 20% of the time, you have transitioned from a researcher to a salesman.

You are there to map the "Problem Space": the specific constraints, frustrations, and failed workarounds the customer currently lives with.
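If you record and transcribe your sessions, the 20% threshold can be checked rather than guessed. The sketch below uses word count as a rough proxy for talk time, which is an assumption, and the transcript format (a list of speaker and text pairs), the function name, and the sample conversation are all hypothetical.

```python
TALK_LIMIT = 0.20  # the article's threshold for the interviewer's share


def talk_share(turns, speaker):
    """Fraction of all transcribed words spoken by `speaker`.

    `turns` is a list of (speaker, text) pairs. Word count stands in for
    talk time here, which is only an approximation.
    """
    total = sum(len(text.split()) for _, text in turns)
    if total == 0:
        return 0.0
    spoken = sum(len(text.split()) for who, text in turns if who == speaker)
    return spoken / total


# Hypothetical transcript excerpt, for illustration only.
transcript = [
    ("founder", "What happened last time?"),
    ("customer", "Last Monday the spreadsheet broke again and three of us "
                 "spent the whole morning fixing it before the weekly "
                 "report could go out."),
    ("founder", "What did that cost?"),
    ("customer", "About twelve hours of staff time, and the report still "
                 "went out a day late to our biggest client."),
]

share = talk_share(transcript, "founder")
is_pitching = share > TALK_LIMIT
print(f"founder share: {share:.0%}, pitching: {is_pitching}")
```

A share above the limit does not prove you were selling, but it is a cheap prompt to reread the transcript and see where you stopped asking and started explaining.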

3. The "Workaround" Audit

The strongest signal of Startup Readiness is the existence of a manual, clunky workaround.

If a customer is currently using a complex Excel sheet, a physical notebook, or a "hacky" combination of three different apps to solve a problem, you have found a vein of gold.

If they aren't doing anything to solve it, the "pain" is likely an inconvenience, not a priority.

Best Customer Interview Questions for Validation

The following customer interview questions are designed to replace leading, hypothetical prompts with diagnostic anchors that bypass the customer's 'Politeness Filter' and surface evidence of real behavior.

| The Goal | Avoid | Use |
| --- | --- | --- |
| Detecting Pain | "Is [X] a problem for you?" | "Walk me through the last time [X] happened. What went wrong?" |
| Measuring Stakes | "Would you pay to fix this?" | "How does this problem affect your bottom line or your team's time?" |
| Finding the 'Who' | "Who else should I talk to?" | "Who else in your organization gets a headache when this happens?" |
| Testing Urgency | "Is this a priority for you?" | "What are you currently doing to solve this? Why hasn't that worked?" |
| Isolating Friction | "Is our UI easy to use?" | "Show me how you do this task today. (Watch them struggle)." |

Interpreting the Data: Signal vs. Noise

Most founders leave customer interviews with a full notebook and the wrong conclusions. The problem is not a lack of data. It is the inability to distinguish genuine market signal from socially polite noise.

Noise is what people say when they want to be helpful without committing to anything. Signal is what people reveal when they describe their actual behavior.

Here is how to tell the difference in practice.

Noise: "This sounds like a great idea. Send me a deck when you're further along."

Signal: "I don't actually care about Feature A, but if you could solve Feature B, I'd pay you right now. My boss is breathing down my neck about it."

The first response is a rejection wrapped in encouragement. The second names a specific problem, a willingness to pay, a decision-maker applying pressure, and an urgent timeline. Those four elements together are what a validated customer need actually looks like.

Noise: "We've looked at a few tools for this. Nothing has quite fit yet."

Signal: "We built our own spreadsheet to handle it. Three people update it manually every Monday. It breaks constantly and we hate it, but we haven't found anything better."

Vague dissatisfaction with the market is not validation. A manual, brittle workaround that a team maintains at real cost is.

The workaround tells you the pain is high enough to justify ongoing effort, which is exactly the threshold your solution needs to clear.

Noise: "Yeah, our team struggles with communication. It's something we'd like to improve."

Signal: "We've tried Slack, then Teams, then a project management tool. We're back to email because nothing stuck. Our last product launch was delayed two weeks because of a miscommunication between engineering and marketing."

The first statement describes a preference. The second describes a documented failure with a measurable consequence.

Consequences (lost time, lost money, delayed launches, damaged relationships) are the currency of real problem validation. If a customer cannot point to a specific consequence, the problem is likely not a priority.

The pattern across all three is the same. Noise is general, future-facing, and costless to say. Signal is specific, rooted in past behavior, and accompanied by evidence of real stakes.
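A first pass at sorting a transcript along these lines can be automated, as long as you treat the result as a prompt for rereading rather than a verdict. The sketch below is a crude keyword heuristic, not a validated classifier: the marker lists, the labels, and the function name are all hypothetical and should be tuned against your own transcripts.

```python
import re

# Hypothetical marker lists. They are deliberately small and will miss cases.
INTENT_MARKERS = [r"\bwould\b", r"\bi'd\b", r"\bwe'd\b", r"\bwill\b",
                  r"\bgoing to\b", r"\bprobably\b"]
PAST_MARKERS = [r"\bbuilt\b", r"\btried\b", r"\bspent\b", r"\bpaid\b",
                r"\bwas\b", r"\blast (time|week|month|quarter|year)\b"]


def tag_statement(text):
    """Label a quote as 'behavior', 'intention', or 'review'.

    'review' means the heuristic found neither kind of marker, or both,
    so a human needs to read the quote in context.
    """
    lowered = text.lower().replace("\u2019", "'")  # normalize curly apostrophes
    has_intent = any(re.search(p, lowered) for p in INTENT_MARKERS)
    has_past = any(re.search(p, lowered) for p in PAST_MARKERS)
    if has_past and not has_intent:
        return "behavior"
    if has_intent and not has_past:
        return "intention"
    return "review"


quotes = [
    "I would use that",
    "We built our own spreadsheet to handle it.",
    "This sounds like a great idea.",
]
for quote in quotes:
    print(tag_statement(quote), "|", quote)
```

Quotes tagged "behavior" are candidates for signal, quotes tagged "intention" are candidates for noise, and everything else still needs your judgment.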

From Interview to Action: Your Next Five Steps

You now have a methodology. The question is whether you have the discipline to use it before you build.

Here is exactly what to do next.

1. Schedule ten one-on-one interviews. Not group sessions, not surveys, not LinkedIn polls. One-on-one conversations with people who represent your target customer, conducted using the diagnostic questions in this article. Ten is the minimum threshold for pattern recognition. Fewer than that and you are working with anecdote, not evidence.

2. Record and transcribe every session. Memory is not a reliable research tool; it is subject to every bias this article describes. Use a transcription tool so you can read the conversation after the fact, when your confirmation bias has cooled down. The phrases you missed in the room will appear on the page.

3. Audit for workarounds. After each interview, ask one question: is this person currently doing something (anything) to solve this problem on their own? A workaround is the single strongest indicator that a problem is real, recurring, and worth solving. If you find zero workarounds across ten interviews, you do not yet have a problem worth building for.

4. Separate behavior from intention. Go back through your notes and mark every statement that describes what a customer has done versus what they would do. Past behavior counts. Future intention does not. If your validation evidence is built primarily on "I would use that" and "I'd probably pay for something like this," you are not validated. You are liked.

5. Map your findings to your Problem Clarity pillar. Every piece of signal you collect either strengthens or weakens your Problem Clarity. If you can articulate the specific problem, identify who experiences it most acutely, quantify the cost of the current workaround, and name the reason existing solutions have failed, then and only then have you earned the right to move to solution design. If you cannot do all four, you have your next interview agenda.
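The thresholds above can be kept honest with a small checklist. The sketch below is one possible way to track them, not an official part of the Startup Readiness Framework: the ten-interview minimum and the four Problem Clarity checks come from this article, while the function name, key names, and sample data are hypothetical.

```python
MIN_INTERVIEWS = 10  # minimum number of interviews stated in the article

# The four Problem Clarity checks named in the article; key names are hypothetical.
CLARITY_CHECKS = (
    "problem_articulated",
    "most_affected_customer_identified",
    "workaround_cost_quantified",
    "existing_solution_failure_named",
)


def readiness_gaps(workaround_flags, clarity):
    """List what is still missing before moving to solution design.

    workaround_flags: one bool per interview, True if a workaround was observed.
    clarity: dict mapping each name in CLARITY_CHECKS to True or False.
    """
    gaps = []
    if len(workaround_flags) < MIN_INTERVIEWS:
        gaps.append(f"interviews: {len(workaround_flags)} of {MIN_INTERVIEWS}")
    if not any(workaround_flags):
        gaps.append("no workarounds observed")
    gaps.extend(
        f"unmet: {name}" for name in CLARITY_CHECKS if not clarity.get(name, False)
    )
    return gaps


# Hypothetical example: ten interviews, four with workarounds, one check unmet.
flags = [True, False, True, False, False, True, False, False, True, False]
clarity = {name: True for name in CLARITY_CHECKS}
clarity["workaround_cost_quantified"] = False
print(readiness_gaps(flags, clarity))  # ['unmet: workaround_cost_quantified']
```

An empty list means the evidence clears the bar described above. Anything left in the list is your next interview agenda.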

The founders who build companies that last do not skip this work. They do it rigorously, they do it repeatedly, and they treat every "No" as instruction rather than rejection. That is not pessimism. That is how you build something the market was already waiting for.

Problem Clarity is one of six pillars in the Startup Readiness Framework. If your customer discovery is solid, the next question is whether the rest of your startup is as ready as your evidence. The Startup Readiness Assessment gives you a full-system diagnostic across all six pillars in under fifteen minutes.

By Dr. Shaun P. Digan

Originally published on Startup.Ready’s Startup Readiness: Validation, Framework, and Tools Blog at: https://www.startupreadinessscore.com/startup-readiness

Original Publication Date: April 15, 2026

Last Updated: April 15, 2026

About the Author

Dr. Shaun P. Digan is the founder of Startup.Ready and the creator of the Startup Readiness Framework, a research-based system for evaluating and validating early-stage startups before launch and early growth. He holds a PhD in Entrepreneurship from the University of Louisville and has spent over 15 years teaching, advising, and consulting with founders on startup strategy, validation, and growth.

In his writing, including The Foundations of Innovation, he focuses on how founders can make better decisions by improving clarity, alignment, and readiness before scaling.